How to do a Twitter Sentiment Analysis?

Or: What's the mood on Twitter?

Hello there!

Today I want to show you how to do a so-called sentiment analysis: measuring the mood on Twitter about a certain keyword. You fetch a number of tweets that contain a keyword of your choice, extract the text of these tweets, and then count whether they contain more positive or more negative words. Of course you can't do this by hand; you need a tool to do the work for you.

Our tool:

Our main tool is called R (yes, just R; it's not a typo).

It is a free “software environment for statistical computing and graphics” and is available for Unix platforms, Windows and MacOS.

It's available here: http://www.r-project.org/

It has a comfortable installer, so this step shouldn't be a problem.

After installing you can open the GUI and get the following screen:

OK, now we can get our other tool: twitteR

It's a package for R that talks to the Twitter API.

You don't have to download it from a website; you can install it directly from within R:

install.packages('twitteR', dependencies=TRUE)

You then have to select a CRAN mirror to download it from and click OK. (You can choose whichever mirror you want.)

R will now download the package and install it.

Then we have to activate it for our current session with:

library(twitteR)

library(plyr)
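If library() complains that a package does not exist, it helps to check which packages are still missing before going on. The following is only a small sketch: the helper name missing_packages is made up for this example, and the list of packages is simply the three this tutorial ends up using (twitteR, plyr and stringr).

```r
# Return the subset of `pkgs` that cannot be loaded in this R installation.
# `missing_packages` is a hypothetical helper written for this example.
missing_packages <- function(pkgs) {
  is.available <- vapply(pkgs, requireNamespace, logical(1), quietly = TRUE)
  pkgs[!is.available]
}

needed <- c("twitteR", "plyr", "stringr")
to.install <- missing_packages(needed)

if (length(to.install) > 0) {
  message("Still missing: ", paste(to.install, collapse = ", "))
  # install.packages(to.install)  # uncomment to install from your CRAN mirror
}
```

The install.packages() call is commented out on purpose so that nothing is downloaded unless you want it.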

Your screen should look like this now:

Ok now we come to a tricky part:

The Twitter Authentication

Since Twitter released version 1.1 of its API, an OAuth handshake is necessary for every request you make. So we have to authorize our app.

First we need to create an app at Twitter.

Go to https://dev.twitter.com/ and log in with your Twitter account.

Now you can see your Profile picture in the upper right corner and a drop-down menu. In this menu you can find “My Applications”.

Click on it and then on “Create new application”.

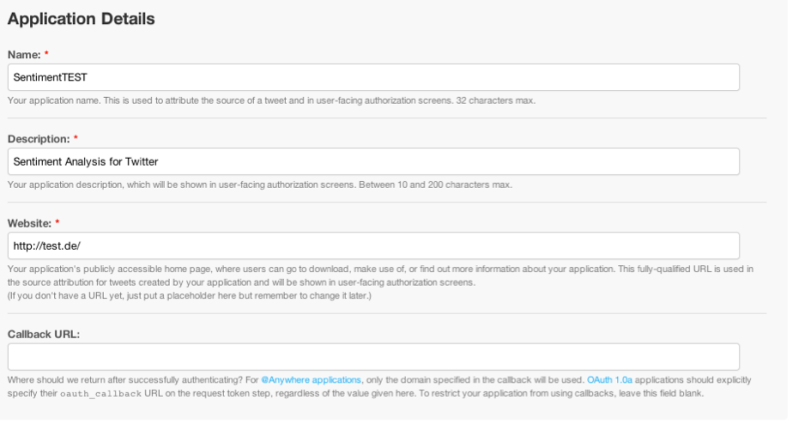

You can name your application whatever you want and set the description to whatever you want. Twitter requires a valid URL for the website; you can just type in http://test.de/ since you won't need it again.

Just leave the Callback URL blank.

Click on Create and you'll be redirected to a screen with all the OAuth settings of your new app. Just leave this window open in the background; we'll need it later.

Continue to R and type in the following lines (on separate lines):

# all three OAuth endpoints have to use https

reqURL <- "https://api.twitter.com/oauth/request_token"

accessURL <- "https://api.twitter.com/oauth/access_token"

authURL <- "https://api.twitter.com/oauth/authorize"

consumerKey <- "yourconsumerkey"

consumerSecret <- "yourconsumersecret"

twitCred <- OAuthFactory$new(consumerKey=consumerKey, consumerSecret=consumerSecret, requestURL=reqURL, accessURL=accessURL, authURL=authURL)

# download the certificate bundle used for the SSL connection

download.file(url="http://curl.haxx.se/ca/cacert.pem", destfile="cacert.pem")

# start the handshake (asks for a PIN) and register the credentials with twitteR

twitCred$handshake(cainfo="cacert.pem")

registerTwitterOAuth(twitCred)

You have to replace yourconsumerkey and yourconsumersecret with the values shown on your app page on Twitter, which is still open in your web browser.

The command twitCred$handshake(cainfo="cacert.pem") will ask you to go to a certain URL and enter the PIN you receive on that page.
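A reader reports in the comments below that the handshake only worked after the access and authorize URLs were switched from http to https. If you keep getting "Unauthorized", a quick check like the following can rule that out. It is just a sketch: find_insecure_urls is a helper name invented for this example, and the three URLs are the ones used in this tutorial.

```r
# Return the names of all URLs that do not start with https://.
# `find_insecure_urls` is a hypothetical helper written for this example.
find_insecure_urls <- function(urls) {
  names(urls)[!grepl("^https://", urls)]
}

oauth.urls <- c(
  requestURL = "https://api.twitter.com/oauth/request_token",
  accessURL  = "https://api.twitter.com/oauth/access_token",
  authURL    = "https://api.twitter.com/oauth/authorize"
)

insecure <- find_insecure_urls(oauth.urls)
if (length(insecure) > 0) {
  stop("Please switch these URLs to https: ", paste(insecure, collapse = ", "))
}
```

If the check passes silently, the URLs are fine and the problem lies somewhere else, for example in the consumer key or the PIN.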

The Data Mining:

OK, we have passed the authentication and can now go on to fetch the tweets we want from Twitter.

Type in:

tweets = searchTwitter("#apple", n=200, cainfo="cacert.pem")

This makes twitteR fetch 200 tweets containing the keyword #apple (you can change the keyword, of course).

After waiting a few seconds you can use length(tweets) to see how many tweets were actually saved; for some keywords, fewer tweets may exist than our sample size n.

Now we have our Tweets.

The Analyzing:

To be able to analyze our tweets, we have to extract their text and save it into the variable Tweets.text by typing:

Tweets.text = laply(tweets, function(t) t$getText())

What we also need are our lists with the positive and the negative words.

We can find them here:

https://github.com/mjhea0/twitter-sentiment-analysis/tree/master/wordbanks

After downloading the ZIP, unpack it and put the two word lists (positive-words.txt and negative-words.txt) in a folder on your computer; just keep the absolute path in mind.

We now have to load the words into variables so we can use them:

pos = scan('/Users/julian/Documents/positive-words.txt', what='character', comment.char=';')

neg = scan('/Users/julian/Documents/negative-words.txt', what='character', comment.char=';')

Of course you have to change the path to match your system. We now have our two lists: pos and neg.
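If you wonder what the comment.char=';' argument is for: in the scan() calls above it tells R to skip every line that starts with a semicolon, so header lines in the word lists do not end up as words. Here is a small sketch with a temporary toy file; the file content is made up for this example and is not the real word bank.

```r
# Write a tiny toy word list with ';' comment lines to a temporary file.
word.file <- tempfile(fileext = ".txt")
writeLines(c("; toy word list, not the real wordbank",
             ";",
             "",
             "good",
             "great",
             "love"), word.file)

# Same call as in the tutorial; quiet=TRUE only suppresses the "Read n items" message.
toy.pos <- scan(word.file, what = 'character', comment.char = ';', quiet = TRUE)
print(toy.pos)
```

Only the three words remain; the comment lines and the blank line are dropped.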

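Now we have to insert a small algorithm written by Jeffrey Breen that scores our tweets. It needs the plyr and stringr packages; if stringr is missing, install it first with install.packages('stringr').

Just copy and paste the following lines and hit Enter: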

score.sentiment = function(sentences, pos.words, neg.words, .progress='none')

{

require(plyr)

require(stringr)

# we got a vector of sentences. plyr will handle a list

# or a vector as an "l" for us

# we want a simple array ("a") of scores back, so we use

# "l" + "a" + "ply" = "laply":

scores = laply(sentences, function(sentence, pos.words, neg.words) {

# clean up sentences with R's regex-driven global substitute, gsub():

sentence = gsub('[[:punct:]]', '', sentence)

sentence = gsub('[[:cntrl:]]', '', sentence)

sentence = gsub('\\d+', '', sentence)

# and convert to lower case:

sentence = tolower(sentence)

# split into words. str_split is in the stringr package

word.list = str_split(sentence, '\\s+')

# sometimes a list() is one level of hierarchy too much

words = unlist(word.list)

# compare our words to the dictionaries of positive & negative terms

pos.matches = match(words, pos.words)

neg.matches = match(words, neg.words)

# match() returns the position of the matched term or NA

# we just want a TRUE/FALSE:

pos.matches = !is.na(pos.matches)

neg.matches = !is.na(neg.matches)

# and conveniently enough, TRUE/FALSE will be treated as 1/0 by sum():

score = sum(pos.matches) - sum(neg.matches)

return(score)

}, pos.words, neg.words, .progress=.progress )

scores.df = data.frame(score=scores, text=sentences)

return(scores.df)

}
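To see what the scoring does, you can try the same idea on a few toy sentences. The following is a simplified base-R sketch of the counting logic, not Jeffrey Breen's function: the name score_sentences, the toy word lists and the toy sentences are all made up for this example, and it needs neither plyr nor stringr.

```r
# Simplified base-R variant of the word-counting idea (hypothetical helper):
# score = number of positive words minus number of negative words.
score_sentences <- function(sentences, pos.words, neg.words) {
  vapply(sentences, function(sentence) {
    # remove punctuation, control characters and digits
    sentence <- gsub("[[:punct:]]", "", sentence)
    sentence <- gsub("[[:cntrl:]]", "", sentence)
    sentence <- gsub("[[:digit:]]+", "", sentence)
    # lower-case the text and split it into words
    words <- unlist(strsplit(tolower(sentence), "[[:space:]]+"))
    sum(words %in% pos.words) - sum(words %in% neg.words)
  }, numeric(1), USE.NAMES = FALSE)
}

toy.pos <- c("good", "great", "love")
toy.neg <- c("bad", "awful", "hate")
toy.sentences <- c("I love this phone, it is great!",
                   "Awful battery. I hate it.",
                   "It is a phone.")

toy.scores <- score_sentences(toy.sentences, toy.pos, toy.neg)
print(toy.scores)
```

The first sentence contains two positive words, the second two negative words, and the third none from either list.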

The final steps:

Type in:

analysis = score.sentiment(Tweets.text, pos, neg)

Congrats, your first sentiment analysis is now saved in the variable analysis.

You can get a table by typing:

table(analysis$score)

Or the mean by typing:

mean(analysis$score)

Or get a histogram with:

hist(analysis$score)

The positive values stand for positive tweets and the negative values for negative tweets. The mean tells you about the overall mood of your sample.
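Besides the mean, it can be handy to see what share of the tweets is positive, neutral or negative. Here is a small sketch with made-up scores; toy.analysis only mimics the score column of the analysis data frame and is not real Twitter data.

```r
# Toy scores standing in for analysis$score (invented numbers).
toy.analysis <- data.frame(score = c(2, -1, 0, 1, 0, -3))

# Turn each score into a mood label based on its sign.
mood <- factor(sign(toy.analysis$score),
               levels = c(-1, 0, 1),
               labels = c("negative", "neutral", "positive"))

mood.counts <- table(mood)             # tweets per mood
mood.share  <- prop.table(mood.counts) # the same as proportions

print(mood.counts)
print(round(mood.share, 2))
print(mean(toy.analysis$score))
```

A mean close to zero with many positive and many negative tweets tells a different story than a mean close to zero with mostly neutral tweets, so it is worth looking at both.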

Note:

Sometimes it doesn't work because some tweets contain invalid characters. Then you have to do the data mining again or change the keyword. As soon as a fix is available I will update this article.
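One possible workaround, offered only as a sketch, is to strip everything that is not plain ASCII from the tweet texts before scoring them. This assumes the texts are UTF-8 encoded, and it is a blunt tool: accented letters and emoji are dropped as well, which can alter words. The helper name strip_non_ascii and the toy texts are made up for this example.

```r
# Drop all characters that cannot be represented in ASCII (hypothetical helper).
strip_non_ascii <- function(texts) {
  iconv(texts, from = "UTF-8", to = "ASCII", sub = "")
}

toy.texts <- c("caf\u00e9 is open", "plain ascii")
cleaned <- strip_non_ascii(toy.texts)
print(cleaned)
```

You could apply the same helper to the extracted tweet texts before passing them to score.sentiment().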

Nice article! Succinct yet useful. Good intro to R.


I seem to have a problem with the authentication.

I get the following error in R (using RStudio)

> twitCred$handshake(cainfo="cacert.pem")

To enable the connection, please direct your web browser to:

http://api.twitter.com/oauth/authorize?oauth_token=g6ivyRUEckJoGcniqV8yo2pR0qcYwwvsJjF7BibzU

When complete, record the PIN given to you and provide it here: 3204922

> registerTwitterOAuth(twitCred)

[1] TRUE

> tweets = searchTwitter("#python", n=200, cainfo="cacert.pem")

[1] "Unauthorized"

Error in twInterfaceObj$doAPICall(cmd, params, "GET", ...) :

Error: Unauthorized

Hm, have you tried to do it with the “normal” R framework? I'm not a big fan of RStudio because it sometimes produces strange error messages.

Yup, just tried it with the normal R console and get the same error.

tweets = searchTwitter("#apple", n=200, cainfo="cacert.pem")

Error in twInterfaceObj$doAPICall(cmd, params, "GET", ...) :

OAuth authentication is required with Twitter’s API v1.1

Hey. I have the exact same problem. Did you manage to get around it okay?

Hey! Did you try to execute the authentication process step by step? Sometimes R doesn't wait the needed time.

I did and I got past it. My new favourite error that I don't understand is this…! I'm close to shooting myself right now!!!

Loading required package: stringr

Error in FUN(X[[1L]], ...) : could not find function "str_split"

In addition: Warning message:

In library(package, lib.loc = lib.loc, character.only = TRUE, logical.return = TRUE, :

there is no package called ‘stringr’

Please try to install the stringr package with install.packages('stringr').

Hi, it's great! Thanks!

I have a problem with non-English letters and am now searching for a way to remove them.

Could you help?

Hey Elizabeth

Nice to have you on my blog.

You can use the searchTwitter() function to get only tweets in a certain language.

Like:

tweets = searchTwitter("iPhone", n=200, lang="en")

would give you only English tweets.

Hope I could help you. If you have further questions, I will be happy to answer.

nice, thanks!

Hi ,

Thanks for the blog. I am trying to do a sentiment analysis of telecom operators, but for every tweet I get some 15 duplicates. So if I pull 1500 tweets, there are only 100 unique tweets. How can I remove the duplicates in such cases?

Also, for tweets in languages other than English: is there a way to get them translated into English by Twitter, or should we do it after saving the tweets?

Hey Uthra,

nice to see you on my blog.

If everything is working correctly, you shouldn't receive duplicates. Or better: if you get all the tweets with just one search, the Twitter API does not return duplicates. Please check whether everything in your code is correct.

And there is no way to get them translated directly by Twitter. You should save the tweets as you receive them and then think about translating them.

Please let me know if you find the problem with the duplicates.

Regards

Julian
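If duplicates do show up in a result set anyway, they can be dropped from the extracted texts before scoring. This is only a sketch with made-up toy texts; it treats two tweets as duplicates when their text is identical after trimming whitespace and lower-casing, which is an assumption of this example and not something the Twitter API defines.

```r
# Toy tweet texts (invented) with one duplicate that differs only in case.
toy.texts <- c("Great network today",
               "great network today",
               "RT @user: Great network today",
               "Call dropped again")

# Compare a normalized copy, but keep the original texts.
normalized <- tolower(trimws(toy.texts))
unique.texts <- toy.texts[!duplicated(normalized)]
print(unique.texts)
```

Note that the retweet is kept, because its text starts with a different prefix; whether retweets should count as duplicates depends on your question.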

Hi

I am facing a problem here with RStudio.

After completing the authentication process I am trying to get the tweets, but it is showing some kind of error.

Athletics.list Athletics.df = twListToDF(Athletics.list)

Error in lapply(X = X, FUN = FUN, ...) :

object 'Athletics.list' not found

> write.csv(Athletics.df, file='C:/temp/AthleticsTweets.csv', row.names=F)

Error in is.data.frame(x) : object 'Athletics.df' not found

Help me..

Hey Sourabh,

there seems to be a problem with the lists and dataframes you are using in your code and not with the Twitter Authentication.

Could you please show me your whole code?

Regards

Hi

Can we extract data from LinkedIn using R in the same way as we are able to get from TwitteR?

Hey Sourabh,

no, you can't, because the LinkedIn API is structured completely differently from the Twitter API. LinkedIn focuses on contacts, and there is no way to search LinkedIn for public posts like you could do with Twitter.

I hope I could help you.

Regards

This is a really nice article.

How can you interpret the score obtained in the analysis?

Hey vishal,

it is really hard to interpret the score in a detailed way, as this is just a very basic way of doing a sentiment analysis. You would be better off using an API like Viralheat or Datumbox for your analysis:

https://thinktostart.wordpress.com/2013/09/02/sentiment-analysis-on-twitter-with-viralheat-api/

https://thinktostart.wordpress.com/2013/09/09/sentiment-analysis-on-twitter-with-datumbox-api/

Regards

Hey Julian,

We had to change the accessURL <- "http://api.twitter.com/oauth/access_token" into "https://api.twitter.com/oauth/access_token" and authURL <- "http://api.twitter.com/oauth/authorize" into "https://api.twitter.com/oauth/authorize" in order to get it to work.

Gr.

Frank

Hey Frank,

thanks for the hint! I will fix it.

Regards

Read all the comments, I still get this error:

> tweets = searchTwitter("#apple", n=200, cainfo="cacert.pem")

[1] "Unauthorized"

Error in twInterfaceObj$doAPICall(cmd, params, "GET", ...) :

Error: Unauthorized

Can't figure out a way. Can you help?

Hey,

I just updated the auth tutorial:

https://thinktostart.wordpress.com/2013/05/22/twitter-authentification-with-r/

Please refresh the page and see if it works with the new code.

Regards

I have gone completely bananas over the twitter authentication and PIN generation.

After executing the following command –

Cred$handshake(cainfo = system.file("CurlSSL", "cacert.pem", package="RCurl"))

OR

Cred$handshake(cainfo="cacert.pem")

I get this –

To enable the connection, please direct your web browser to:

http://api.twitter.com/oauth/authorize?oauth_token=V0W4WSrgKg7s336bMv6o2kCPmunzEToyW2UhnTCCcpM

When complete, record the PIN given to you and provide it here:

On redirecting, I get the “Authorize App” page. After that, I get either of the messages –

“The web page is not available” OR

“Could not connect to 127.0.0.1:8000/twitter_callback”

I have tried 4 different Callback URLs –

1) 127.0.0.1:8000/twitter_callback

2) 127.0.0.1:8080/twitter_callback

3) 127.0.0.1:8000/twitter/oauth

4) 127.0.0.1:8080/twitter/oauth

Note – I have even tried the shortened versions of the URLs mentioned above through Bitly

I have even tried changing the accessURL and authURL from http to https.

After struggling with it for days, I still see no signs of moving ahead.

Please guide me.

Hey Nitisch,

there is no need to provide a callback URL in the app settings. Just leave that field blank, as you get redirected to a Twitter page automatically and you just have to copy and paste the PIN code.

But I will update the Twitter Auth post in a few minutes, as the whole login process got much easier in the newest version of the twitteR package.

Regards

Hi Julianhi,

is it possible to remove tweets from the company while forming the corpus? I want to do this because most of the tweets from the company do not reflect the sentiments of the consumers and hence would result in some noise. For example, offers, new products etc. do not add value to the sentiment.

Please help, it's urgent for my project.

regards,

Abhishek

So you mean you want to check whether the tweet message contains a certain keyword and, if yes, delete this tweet?

If so, you can use the grepl function: http://stat.ethz.ch/R-manual/R-devel/library/base/html/grep.html

Regards
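As a rough sketch of the grepl idea, here is how tweets containing a certain keyword could be dropped from a vector of texts. The toy texts and the keyword "offer" are made up for this example; with real data you would use the extracted tweet texts instead.

```r
# Toy tweet texts (invented for this example).
toy.texts <- c("New offer: 20% off all plans",
               "My connection is awful today",
               "Special OFFER for new customers")

# grepl() returns TRUE for every text that contains the keyword.
is.promo <- grepl("offer", toy.texts, ignore.case = TRUE)

# Keep only the texts that do not contain it.
kept.texts <- toy.texts[!is.promo]
print(kept.texts)
```

This filters by the content of the tweet only; it does not look at who wrote it.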

Yeah, sort of. I mean, I want to remove tweets from the admin of that Twitter account. So suppose Apple is tweeting about anything on their account with #apple; I want to remove that. And I want to retain all the other tweets with #apple from other users.

hi,

Do you know any method to do this (regarding the above question)? Please reply. I also have another question: can I extract Facebook comments as well and add them to the tweets corpus to have a bigger set of data?

regards,

Hi Julianhi.

I know that I am too late, but could you explain to me how this code works?

function(t) t$getText()

I understand the “lapply” function, but I can't find the “getText” function.

Thanks in advance!!

Hey,

it is part of the status-class of the twitteR package. So the tweets are stored in a status-class which provides the function getText() to return the text of the tweets.

Regards